Notes from the 2026 IMM Convening in Montreal

May 2026

Author: Kristina Kuznetsova leads impact measurement and management at Sarona Asset Management, working across emerging and frontier market portfolios. She is an Investment Committee member at the Waterloo Region Community Foundation and a member of CAFIID.

On March 17-18, about a hundred practitioners working in impact measurement and management (IMM) gathered in Montreal for what is probably my favourite kind of professional event: no keynote speakers, no polished panel presentations, no passive audience.

I have been to enough conferences to know that the hallway conversations are usually better than the sessions. This event made the hallway conversations the whole thing. There was very little room to hide behind jargon — sitting around the same small table, people wanted to know how things are actually done and what results they lead to. The moment someone dropped terms like "intersectionality" or "climate action" without additional context, the table asked follow-up questions.

"Who are we doing this for?" This question framed the entire convening, and it reframes every technical debate about measurement that follows.

This blog offers reflections and prompts from the IMM conversations rather than a detailed analysis of each topic. It covers five themes, selected for their relevance to my work and interests, and a part two may follow. The event was organized and hosted by Fondaction, the Global Impact Investing Network, Impact Frontiers, and Impact Principles.

1. The IMM field is tired of talking. It wants to act.

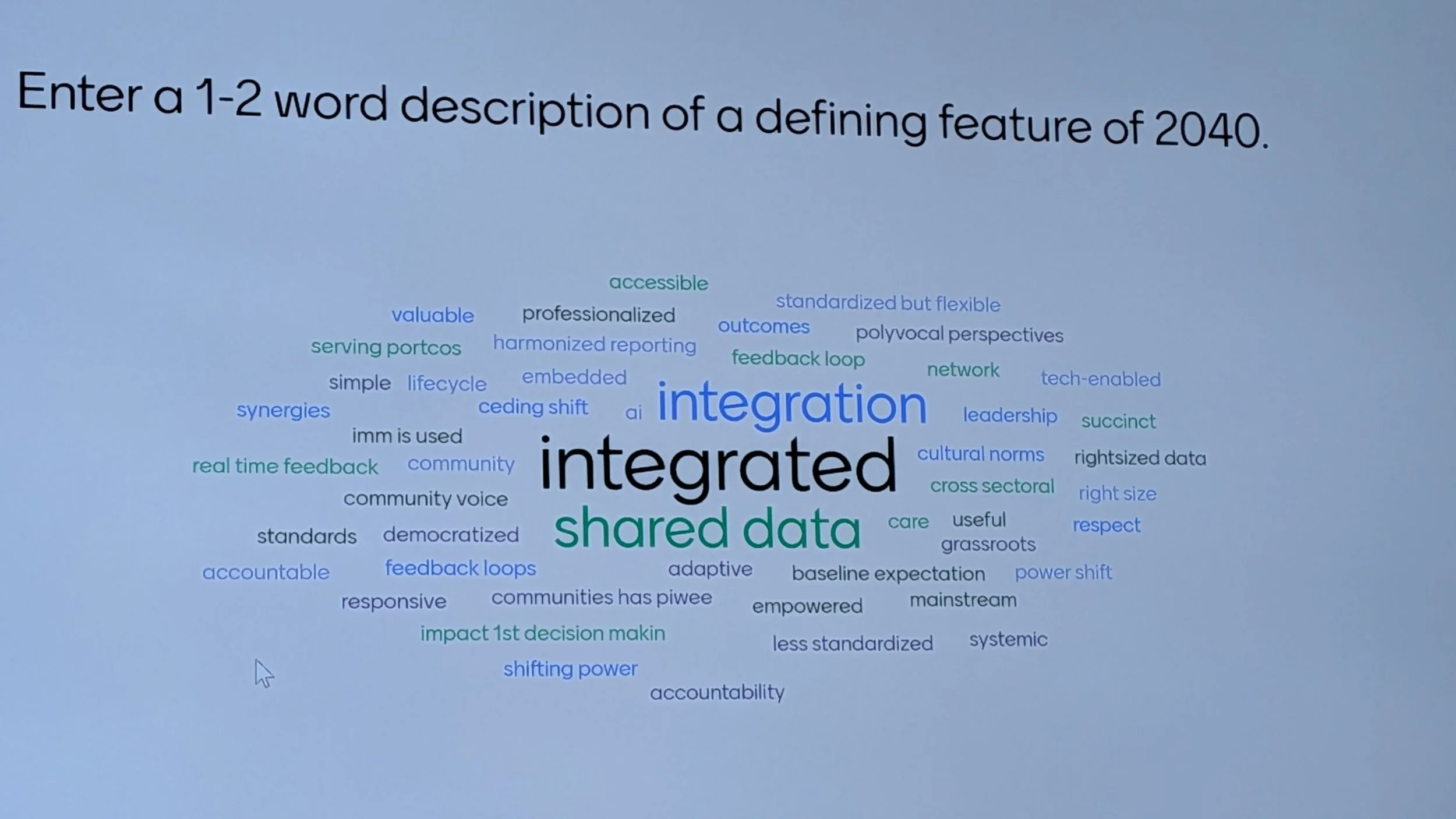

What effective IMM looks like — and what it needs to look like by 2040.

One of the first sessions asked participants to imagine what good impact measurement and management would actually look like fifteen years from now. The consensus picture was about integration:

IMM is integrated into all financial decisions, not limited to impact investors — it is embedded across mainstream finance (banks, credit unions, private equity)

Impact data is actively used by investment teams, treasury, and leadership in real decision-making, not just for reporting or marketing

Frameworks are simple and practical, focused on a limited set of useful metrics rather than complex, layered methodologies

Impact targets are linked to financial incentives (e.g., carried interest, investment outcomes), making impact a core business strategy, not a compliance checkbox

Several persistent tensions were identified as obstacles to progress:

Data access vs. privacy: better end-client data is needed, but privacy and ethical data use are genuine constraints

Data collection vs. usability: large volumes of data are gathered but rarely used effectively in decisions

Fragmented investor reporting requirements: multiple investors request different data from the same portfolio companies, creating duplication and burden

Double counting of impact: the same outcomes are often claimed in full by several investors in the same deal, reducing credibility

"Fluffy" perception: a persistent gap between theoretical frameworks and practical application in investment contexts

2. Most impact washing is unintentional, which makes it harder to fix.

How impact washing happens, why it persists, and what the field is doing about it.

The field is concerned about impact washing, but a key point raised was that it is usually neither intentional nor purely driven by marketing. It more often stems from unclear definitions, pressure from Limited Partners (LPs) and other investors, limited data, or the inherent difficulty of measuring outcomes rigorously.

Impact washing can occur at any or all stages of the investment lifecycle: product design, investment strategy, active management, and reporting/disclosure.

Common issues identified include:

Overstated additionality

Double-counting outcomes across multiple investors in the same deal

System-level claims based on company-level data

Outputs presented as outcomes

Harm mitigation framed as positive impact

The distinction that resonated most with me: strong IMM practices reduce the risk of impact washing, but they do not automatically maximize impact. Good measurement and meaningful outcomes are related goals, but they are not the same thing. The field has to hold both simultaneously.

The practical message for practitioners is to read your reports and investment memos like a skeptic. Flag anything that relies on weak attribution, outputs dressed as outcomes, or claims that stretch beyond your actual area of interest.

3. If no one uses the data, you are not doing IMM. You are doing admin.

The limits and trade-offs of measurement.

Impact measurement is not free. It takes time, money, and capacity — from fund managers, from portfolio companies, and from the communities participating in the measurement activity. In emerging markets in particular, where those resources are already stretched, there is a real risk that the burden of measurement undermines the very enterprises the investment is meant to support.

The concept that resonated most in this session was right-sizing: IMM expectations should be proportional to fund size and enterprise maturity. That means lighter requirements for seed-stage companies and more rigorous expectations for growth-stage companies, with explicit clarity on who pays for the technical assistance needed to make it work.

Several practical warning signs were offered. If it takes more than three sentences to explain what you are measuring and why, that is a red flag. If your frameworks are disconnected from the lived experience of the borrowers or communities participating in the measurement, that is a red flag. And if you are collecting data that no one actually reviews when making decisions, that is arguably the biggest red flag of all — it means measurement has become a reporting exercise rather than a management tool.

"Utilization-focused reporting — measuring what actually gets used in decisions — is more valuable than exhaustive data collection."

The question I kept returning to: what would we stop measuring if we were honest about what we actually use? My instinct is that most organizations would end up with a shorter list — and a more useful one.

4. Stop choosing between standardized and customized data frameworks. You need both.

Standard vs. customized data collection — when each approach serves the work.

This session was structured as a genuine debate, and I appreciated that it did not pretend there was a clean answer. Both approaches have real advantages and real risks, and the field tends to oscillate between them rather than holding both.

The case for standardized indicators is straightforward: they are accessible to Limited Partners (investors) and non-impact professionals, they enable comparability across a portfolio, they scale with regulation, and they are cost-efficient. For fund-of-funds structures working across multiple markets and mandates, standardization is also how you make aggregated data legible. The risks are equally real: standardization can treat people as numbers, bias investment decisions away from deals that do not fit pre-set categories, and create reporting burdens that are disproportionate for early-stage enterprises in data-constrained markets.

The case for customized indicators is that they are more relevant to the investee and the beneficiary, enable better active management, generate stronger narratives, and tend to produce higher-quality information. The downside is that they are hard to aggregate, easy to cherry-pick, and generate one-off learnings that are difficult to generalize across a portfolio or report credibly to LPs.

The practical recommendation that came out of the session was a genuinely useful reframe: mixed methods, not a compromise between the two. A small set of standardized metrics enables comparability, and qualitative data and narratives from end clients and communities bring the numbers to life. The warning signs of imbalance are worth keeping in mind: incomplete datasets that cannot be aggregated, indicators that are disconnected from what beneficiaries actually experience, and data collection processes that impose a burden with no visible benefit to the enterprise or to the communities providing the data.

5. AI might make IMM sharper.

AI in IMM: where it is being applied and what risks need managing.

The AI applications already in use include due diligence tools that embed historical investment knowledge to screen deals and structure unorganized data, operational automation for investment memos and benchmarking, and qualitative integration tools that turn interviews and customer feedback into impact assessments. The concept I found most interesting was an AI Investment Committee member. It is not a replacement for human judgment but a tool designed to challenge assumptions and surface blind spots before a real Investment Committee (IC) meeting. The hardest part of investment decision-making is often not the analysis itself but knowing what you are not seeing, and a tool built specifically to stress-test impact assumptions has a different value proposition than a reporting dashboard.

The risks that were listed:

Data privacy and confidentiality are a constraint, particularly with sensitive portfolio company data that should not be fed into external models, like public generative AI chatbots, without proper governance.

AI model bias is a structural concern: AI systems inherit the biases of their training data, and in impact investing, that data skews heavily toward developed markets and established asset classes. Most publicly available financial, ESG, and company-level datasets are dominated by large-cap, listed companies in North America and Europe. Private markets, small and growing businesses, and emerging and frontier market contexts are chronically underrepresented. An AI tool trained on this corpus will produce outputs calibrated to that reality, not to the markets where impact capital is most needed.

Output reliability matters too: hallucinations and overconfident projections become serious problems when the stakes involve capital allocation decisions.

For climate-aligned investors, the environmental footprint of AI deployment is not an abstract concern. Data centres powering AI systems are among the fastest-growing sources of electricity demand and water consumption globally, often in jurisdictions where the grid is still carbon-intensive.

The practical guidance from the session was consistent: build controlled internal environments before opening AI tools to broader use, limit sensitive data in prompts, and approach the buy vs. build question with genuine openness rather than defaulting to either. A hybrid approach seems to be where most organizations are landing. There is also a clear field-level need for a shared prompt engineering guide tailored to IMM workflows.

AI is entering the IMM toolkit whether we are ready or not. The organizations getting value from it are the ones that started small, kept sensitive data out of external models, and used it to challenge assumptions rather than automate conclusions.

What I am taking back:

IMM needs to move from reporting to decisions. The shift is from "measuring impact" to embedding impact into how capital is allocated and managed. That means impact data informs investment decisions, incentives (like carry*), and portfolio strategy, not just LP reporting.

Impact washing is usually an editing problem, not an ethics problem. Review your LP communications like a skeptic. If a claim relies on weak attribution, outputs dressed as outcomes, or system-level change from company-level data — fix the language before someone else flags it.

Keep IMM simple enough to explain in three sentences. If we cannot explain what we measure and why in simple terms, it is probably too complex to be useful.

*Carry, or carried interest: a share of profits earned by a fund manager (general partner) as performance compensation. It is a standard feature of private equity fund structures.